Algorithm efficiency

The efficiency of an algorithm is mainly measured by time complexity and space complexity. Time complexity describes the computational time required to run an algorithm for a set of input elements. It is generally expressed as a function of the size of the input.

Asymptotic notations

Three types of asymptotic notations are used to express both the time complexity and space complexity of an algorithm. We will compare these notations by three different scenarios of the same algorithm, the linear search algorithm. Suppose we have a list of n integer values, and we need to find the index of the first occurrence of x in this list. If x is not found, then we need to return -1. Now we are going to describe the three types of asymptotic notations for this linear search algorithm.

- Big O notation

(O): It describes the worst-case scenario for an algorithm. In the linear search algorithm, the worst-case occurs when the searched number is not found in the list. So, the worst-case performance of linear search isO(n). - Omega notation

(Ω): It describes the best-case scenario for an algorithm. The best-case in the linear search algorithm occurs when the searched number is found at the list’s first index. Thus, the best-case performance of linear search isΩ(1). - Theta notation

(θ): It describes the average-case scenario for an algorithm. In an average case, the performance of linear search isθ(n/2).

Time complexity calculation

Generally, we use the big O notation O(...) to denote an algorithm’s time complexity, where ... represents a function. This function may contain the term n, which implies the size of the input elements. Some examples of the big O notation are: O(n), O(nlogn), O(2n), etc. The time complexity of an algorithm is commonly estimated by counting the fundamental operations performed by the algorithm.

Counting loops

Loops are the main components in time complexity calculation. An algorithm with a single loop iterating through all n input elements has a time complexity of O(n). If it contains k nested loops iterating through all n input elements, the time complexity will be O(nk).

The following code snippet with a single loop has a time complexity of O(n):

for(int i=1; i<=n; i++)

{

// Time complexity: O(n)

}

The following code snippet with a two levels nested loop has a time complexity of O(n2):

for(int i=1; i<=n; i++)

{

for(int j=1; j<=n; j++)

{

// Time complexity: O(n^2)

}

}

Similarly, the following code snippet with a three levels nested loop has a time complexity of O(n3):

for(int i=1; i<=n; i++)

{

for(int j=1; j<=n; j++)

{

for(int k=1; k<=n; k++)

{

// Time complexity: O(n^3)

}

}

}

Order of magnitude

The exact number of iterations is not reflected in the big O notation while calculating the time complexity.

The following code snippet with a single loop with 5*n+3 iterations has a time complexity of O(n):

for(int i=1; i<=5*n+3; i++)

{

// Time complexity: O(n)

}

For another example, the following code snippet has a time complexity of O(n2):

for(int i=1; i<=n-1; i++)

{

for(int j=i+1; j<=n; j++)

{

// Time complexity: O(n^2)

}

}

Similarly, the following code snippet has a time complexity of O(n3):

for(int i=1; i<=n-2; i++)

{

for(int j=i+1; j<=n-1; j++)

{

for(int k=j+1; k<=n; k++)

{

// Time complexity: O(n^3)

}

}

}

Maximum complexity

If an algorithm has several loops with individual time complexity of O(n), O(n2), and O(n3), then the time complexity of the algorithm is O(n3).

For example, the following code snippet has a time complexity of O(n3):

for(int i=1; i<=5*n+3; i++)

{

// Time complexity: O(n)

}

for(int i=1; i<=n-1; i++)

{

for(int j=i+1; j<=n; j++)

{

// Time complexity: O(n^2)

}

}

for(int i=1; i<=n-2; i++)

{

for(int j=i+1; j<=n-1; j++)

{

for(int k=j+1; k<=n; k++)

{

// Time complexity: O(n^3)

}

}

}

Express complexity with factors

The time complexity of an algorithm can depend on several factors. In this case, the time complexity notation contains several variables.

For example, traversing a matrix with n rows and m columns has a time complexity of O(n*m):

for(int i=1; i<=n; i++)

{

for(int j=1; j<=m; j++)

{

// Time complexity: O(n*m)

}

}

Common classes of time complexity

The following table contains common classes which are used to analyze the time complexity of an algorithm. The table is listed in increasing order of time complexity.

| Notation | Name | Description | Example |

|---|---|---|---|

| O(1) | constant | independent of the size of the input data | # accessing the n’th element of an array # arithmetic operation # push and pop operation of a fixed size stack # accessing a key value of the hashtable # comparison |

| O(logn) | logarithmic | # half the input size at each step # log2n is the number of times n should be divided by 2 to become 1 | binary search |

| O(n) | linear | iterate the input elements for a constant number of times | # linear search # calculate the sum of an array # finding the minimum or maximum value of an array |

| O(nlogn) | linearithmic, loglinear | # generally it indicates that the algorithm sorts the input elements # usage a data structure in which each operation takes log n time | # merge sort # heap sort |

| O(n2) | quadratic | # contains two nested loops # iterating all pairs of input elements | # bubble sort # selection sort # insertion sort # the sum of two-dimensional array |

| O(n3) | cubic | # contains three nested loops # iterating all triplets of input elements | Naive multiplication of two n×n matrices |

| O(2n) | exponential | # doubles the growth rate in each addition to the input elements n# iterates all subsets of the input elements | finding all subsets of a given array |

| O(n!) | factorial | generally it indicates that the algorithm iterates through all permutations of the input elements | finding all permutations of a given array |

A polynomial algorithm has a time complexity of O(nk), where k is a constant. All the above notations except O(2n) and O(n!) indicate polynomial algorithm.

Efficient algorithm selection

Knowing the time complexity and maximum input size can help determine if an algorithm can solve a problem within a time limit. Suppose we are given a problem with an input size n = 105 and a time limit of 1 second. If we select an algorithm with O(n2) time complexity, it will require 1052 = 1010 operations. Using an O(n2) algorithm, we cannot solve the problem within the time limit. To solve the problem within the given limit, we need to use an algorithm with O(nlogn) or O(n) time complexity.

The following table contains the maximum input size to solve a problem within one second for several common time complexity classes:

| Notation | Maximum input size n for 1s time limit |

|---|---|

| O(n!) | n <= 10 |

| O(2n) | n <= 20 |

| O(n3) | n <= 500 |

| O(n2) | n <= 5000 |

| O(nlogn) or O(n) | n <= 106 |

| O(1) or O(logn) | n > 106 |

References:

- Data Structure Asymptotic Notation

- Linear Search - Hackerearth

- Linaer Search - Wikipedia

- Big O notation - Wikipedia

- Time complexity - Wikipedia

- 8 time complexities that every programmer should know

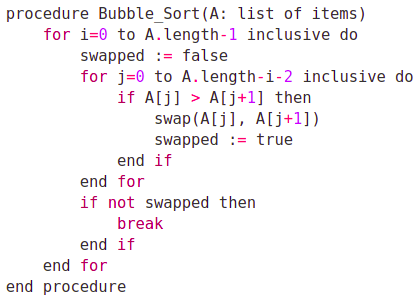

- Bubble sort

- Insertion sort

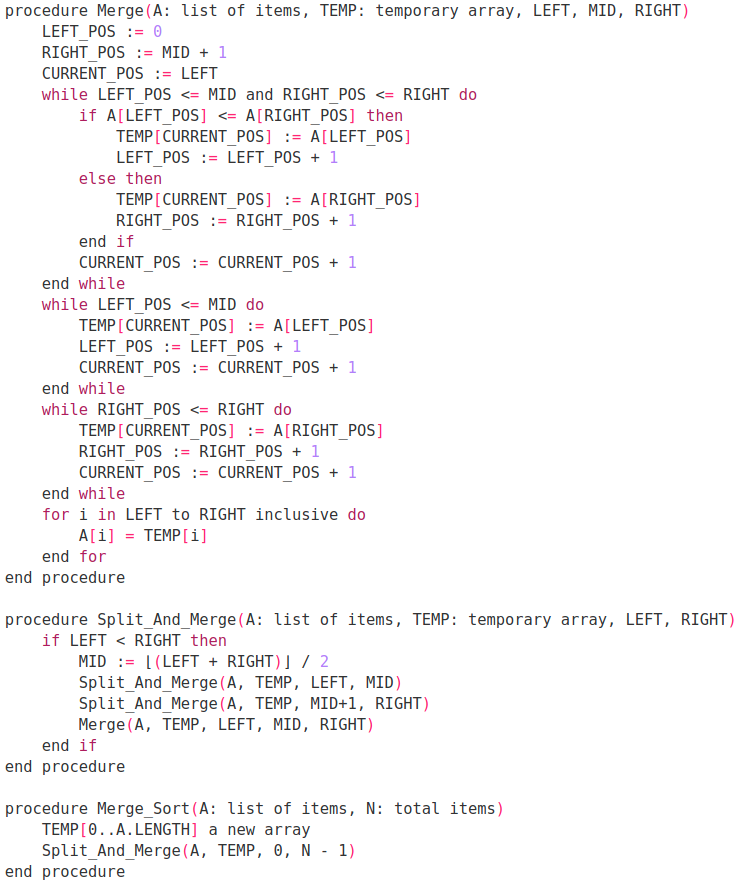

- Merge sort

- Counting sort

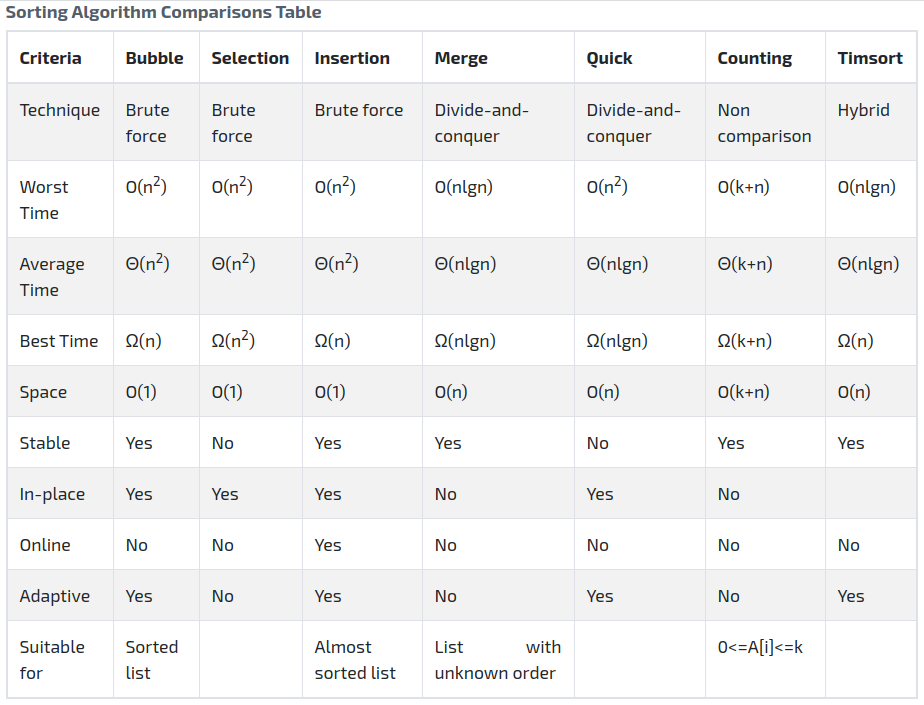

- Sorting Algorithm Comparisons

- Competitive Programmer’s Handbook

- Introduction to algorithms by Cormen, Thomas H and Leiserson, Charles E and Rivest, Ronald L and Stein, Clifford

Advertisement